3,2,1… it’s ‘lift-off’ for Participation Reports

Metadata is at the heart of all our services. With a growing range of members participating in our community—often compiling or depositing metadata on behalf of each other—the need to educate and express obligations and best practice has increased. In addition, we’ve seen more and more researchers and tools making use of our APIs to harvest, analyze and re-purpose the metadata our members register, so we’ve been very aware of the need to be more explicit about what this metadata enables, why, how, and for whom.

This week we take an important step towards this goal with a much-anticipated announcement: Participation reports are in beta release—so come along and take a look!

What does this mean?

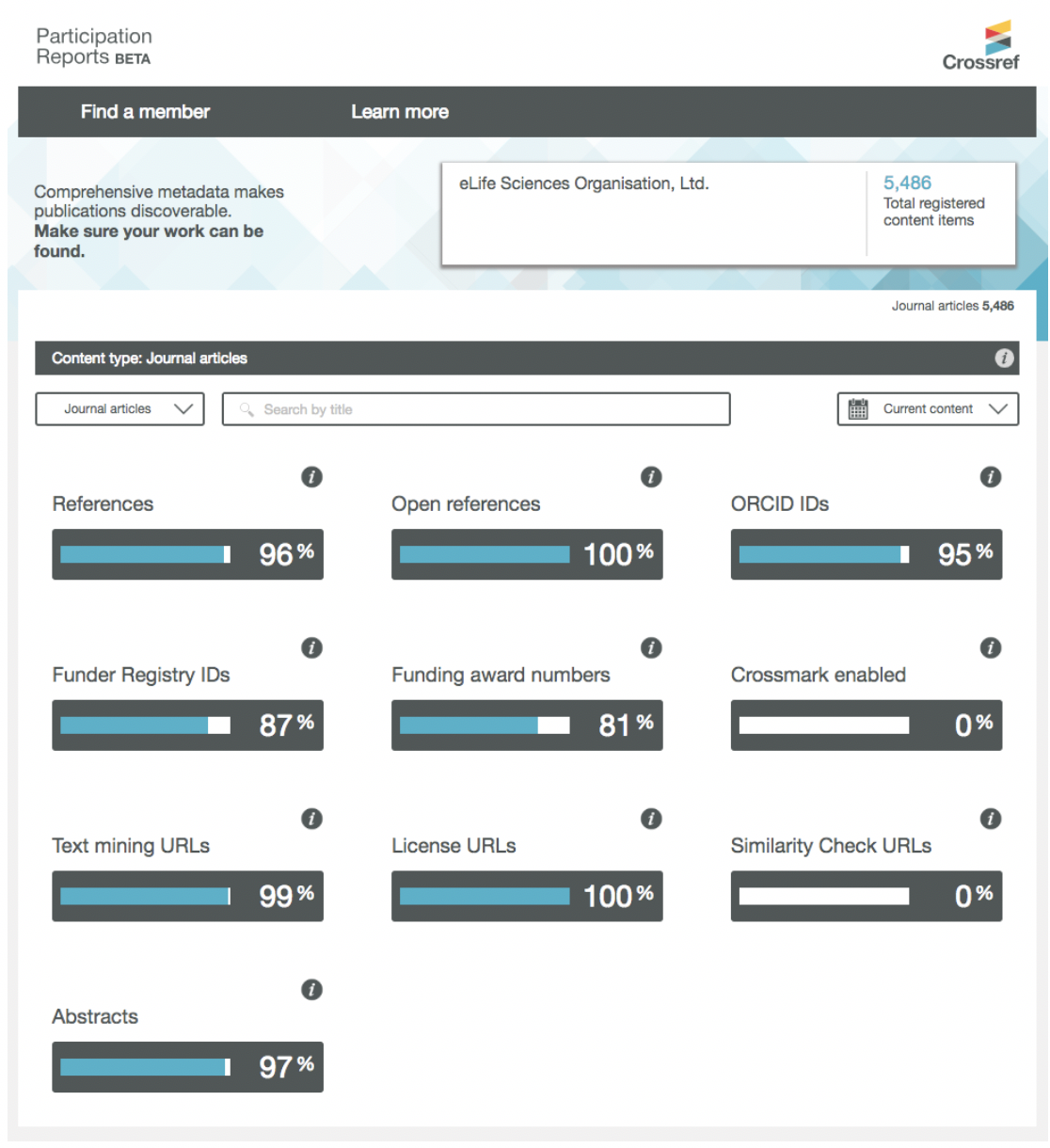

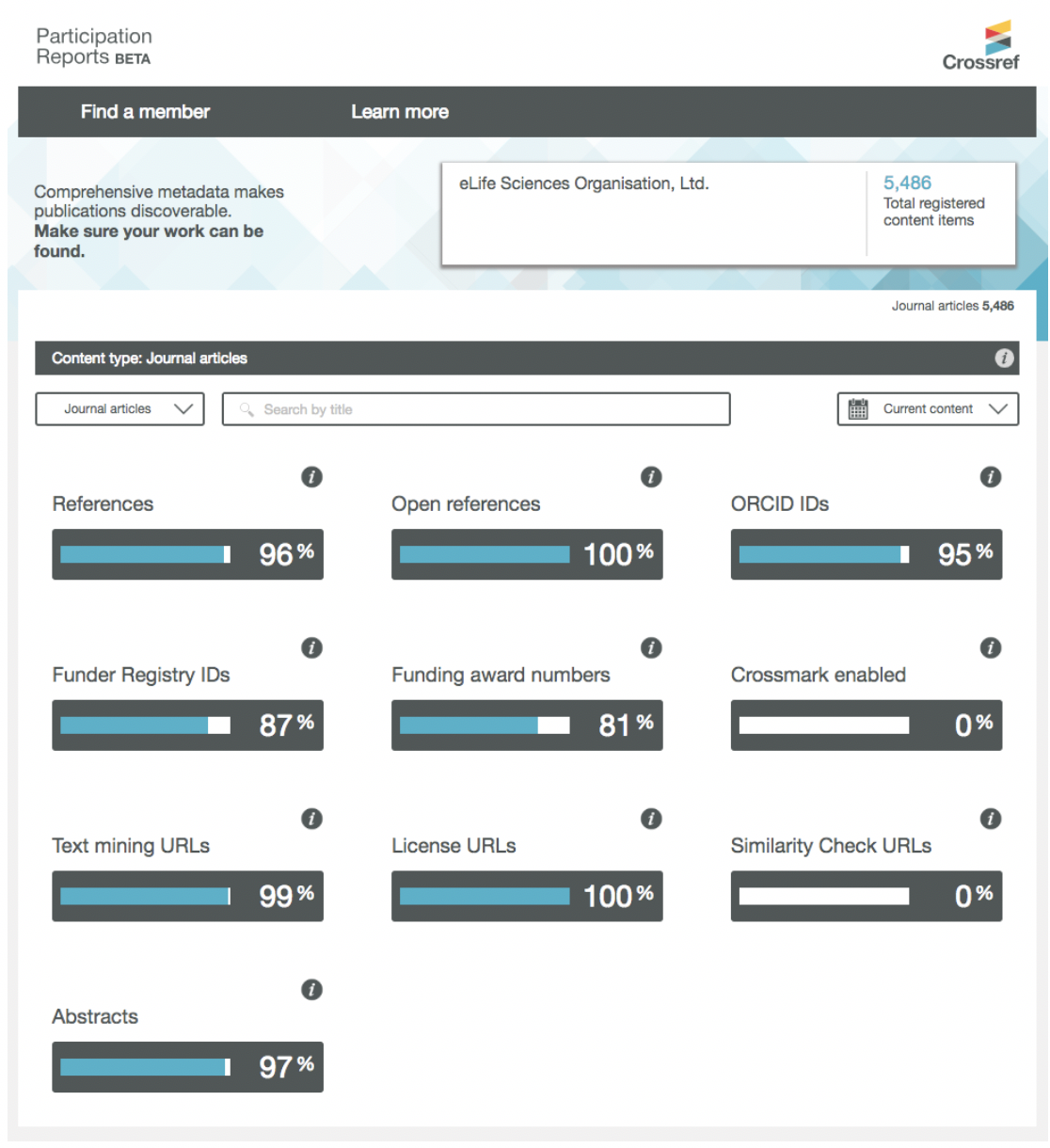

Participation Reports gives—for the first time—a clear visualization of the metadata that Crossref has. Search for any member to find out what percentage of their content includes 10 key elements of information, above and beyond the basic bibliographic metadata that all members are obliged to provide. This includes metadata such as ORCID iDs for contributors, funding acknowledgements, reference lists, and abstracts—richer metadata that makes content more discoverable, and much more useful to the scholarly community as a whole, including among members themselves.

You can filter by content type, such as journal articles, book chapters, datasets, and preprints, and compare current content (the past two calendar years plus year-to-date) with backfile content (anything older). Within the journal articles view, you can drill down to see metadata completeness for each individual journal. We hear that editorial boards are keen to see that aspect!
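For readers who like to see the arithmetic, here is a minimal sketch of how such percentages could be worked out. It is an illustration only, not how the reports are actually built: the record layout, the four element names, and the `coverage` and `is_current` helpers are all assumptions made for this example (the real reports track 10 elements).

```python
from datetime import date

# Hypothetical element names chosen for illustration only.
ELEMENTS = ("orcid", "funding", "references", "abstract")


def is_current(pub_year, today=None):
    """Current = the past two calendar years plus year-to-date."""
    today = today or date.today()
    return pub_year >= today.year - 2


def coverage(records, today=None):
    """Return the percentage of records carrying each element,
    split into 'current' and 'backfile' buckets."""
    buckets = {"current": [], "backfile": []}
    for rec in records:
        key = "current" if is_current(rec["year"], today) else "backfile"
        buckets[key].append(rec)

    result = {}
    for key, recs in buckets.items():
        result[key] = {
            el: (100.0 * sum(1 for r in recs if r.get(el)) / len(recs))
            if recs else 0.0
            for el in ELEMENTS
        }
    return result


# Made-up sample records, purely to exercise the function.
sample = [
    {"year": 2018, "orcid": True, "abstract": True},
    {"year": 2016, "orcid": False},
    {"year": 2015, "references": True},
    {"year": 2010},
]
report = coverage(sample, today=date(2018, 8, 1))
```

With these made-up records, half of the current content has an ORCID iD and half of the backfile has a reference list; an empty bucket simply reports 0% for every element.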

We’re delighted that participation reports are now available in beta. That means that while we are confident that the data shown is accurate, there could be the odd glitch as we monitor use.

Thank you to everyone who has helped us to test the reports and provided so much valuable feedback. We plan to expand and improve participation reports to include additional metadata elements, metadata quality checks, and adherence to Crossref best practice such as DOI display. We’re still listening, so do get in touch if you have questions or suggestions, or would like a more detailed walkthrough. There is also a feedback button right in the tool.