2026 May 14

From 1990 to today: connecting HFSP's grant history to the research nexus

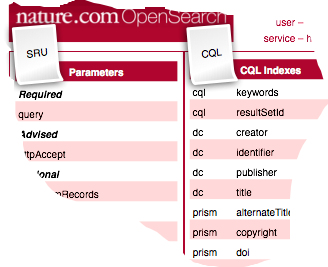

For a funder with more than thirty years of grant history, making all of its funding metadata openly available is no small undertaking. In this conversation, I chat with Guntram Bauer, Chief Scientific Officer at the Human Frontier Science Program (HFSP), about how the organisation is working to register decades of grant data with Crossref, the challenges of linking historical awards to published research outputs, and what open, structured funding metadata means for accountability to member countries and the wider scientific community.